ICYMI, all GitHub Copilot for Individuals users now have access to GitHub Copilot Chat beta!

How I used GitHub Copilot Chat to build a ReactJS gallery prototype

GitHub Copilot Chat can help developers create prototypes, understand code, make UI changes, troubleshoot errors, make code more accessible, and generate unit tests.

Ever since we announced GitHub Copilot Chat in March this year, I’ve been thinking a lot about how it’s improving developer happiness and overall satisfaction while coding. Especially for junior developers looking to upskill, or those in the learning phase of diving into a new framework, GitHub Copilot Chat can be such a valuable tool to have in your back pocket.

The capabilities of GitHub Copilot Chat

With GitHub Copilot Chat, you can now interface with Copilot as a context-aware conversational assistant right in the IDE, allowing you to execute some of the most complex tasks with simple prompts. This goes beyond GitHub Copilot’s original capabilities, which focused on autocompletion and translating natural language comments into code. Now, developers can not only get code suggestions in-line, but they can ask Copilot questions directly, get explanations, offer prompts for code, and more, all while staying in the IDE—and in the flow.

Navigating a new framework (and saving time)

Recently, I was preparing a conference talk and demo about ReactJS, and I had to think a bit about what kind of app I wanted to make with the help of Copilot Chat. Since photography is a hobby of mine, I decided to make a photo gallery of the tulip fields and flower shows around Amsterdam. In the end, I went through a couple different versions of this photo gallery with Copilot Chat. Using a probabilistic model, which is currently based on OpenAI’s GPT-3.5-turbo, it found the best suggestion for me based on how I prompted it, including the question I asked, the code I’d started writing, and other open tabs in my IDE.

It had been a long time since I had used React, so it probably would’ve taken me a few days of searching and trial and error before coming up with something decent. But with Copilot Chat, each iteration of my photo gallery only took me about 20-30 minutes to go through.

Making prototypes and generating new code

What I most enjoyed about using Copilot Chat to create something new was discovering multiple ways I could implement my component. I didn’t have to leave my IDE and search for advice or a component to use because Copilot would suggest something in real time. If it offered me a suggestion that didn’t work out well, I could give it feedback on why that suggestion didn’t work, which enabled it to offer suggestions that better suited my needs.

Even though I was working in an unfamiliar framework, Copilot Chat enabled me to immediately start churning out my ideas, which was incredibly satisfying. It was empowering to discover that I could get something done so much faster than I would have anticipated without any help.

This idea of looking for external help and examples to understand code has been part of the learning process since well before we had AI pair programming tools. I remember when I was first starting in my career and discovering all these new frameworks. I would spend hours, days, weeks doing tutorials and learning about different ways of implementing things. I would learn by copying and pasting things I saw on Stack Overflow and seeing how they fit in with the rest of my code (or by chatting with the buddy I shared a cubicle with at the time).

A lot of the time, these code snippets didn’t even work, but having something to start with really helped with the learning process and that excitement propelled me forward to the next step. This is exactly the magic I felt when using Copilot Chat—while being able to get a contextual suggestion that actually worked and helped me quickly progress to the next thing. Not to mention the amount of time and energy I saved by staying within the context of VS Code instead of searching through websites and other comments online (and avoiding some stress caused by the sentiment of some Stack Overflow comments).

GitHub Copilot Chat in action

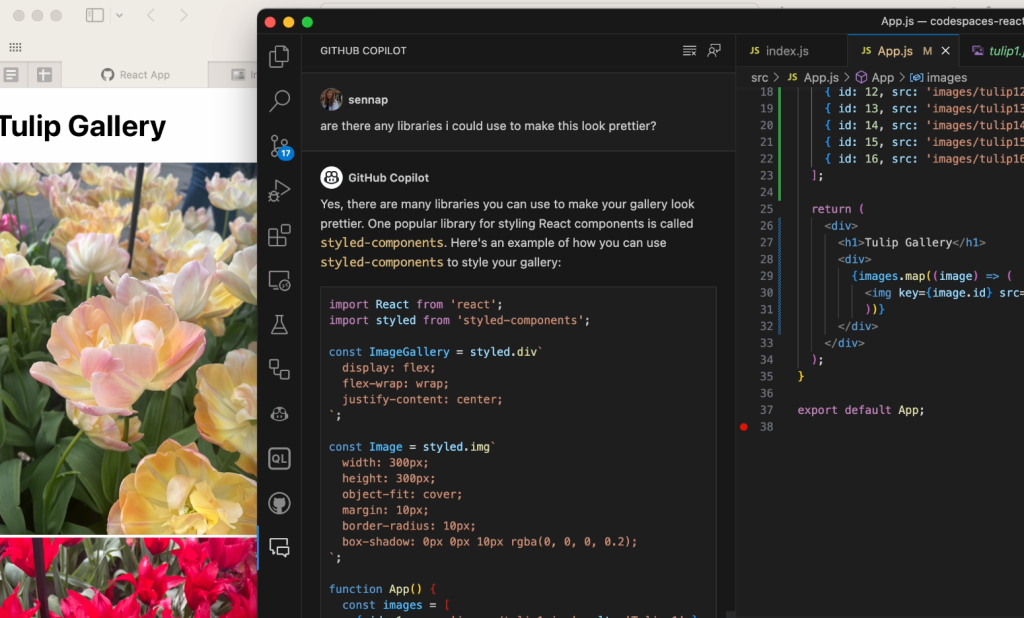

When it came time to build my photo gallery, I used Copilot Chat to get suggestions for popular React libraries I could use. I checked out a few of them in separate iterations of the gallery, but styled-components seemed to be the easiest one for me to configure.

I wanted to include a modal as well, so I asked Copilot if the styled-components library supported modals. I was really surprised that it knew exactly how to utilize the modal component of the library and how to pass the props in and handle the onClick functionality from the get-go.

In the video, you may notice that it initially gives me a generic suggestion with some boilerplate examples of how to define a modal component and how to reference it from another file. I then asked it to iterate on that suggestion and give me something more specific to how I defined my gallery. This is important because the power of GitHub Copilot is really in the prompt that you provide it: the more fine-tuned the information, the more powerful its suggestions will be. For further reading, check out these prompt tips and tricks for leveraging GitHub Copilot effectively as well as this post on how we compose prompts at GitHub.

Testing out a UI change based on a natural language prompt

When I first tried rendering a modal, the close button was out of view in the top-right corner of the screen. This isn’t too difficult to fix if you regularly do front-end development. Full transparency: I would have needed to Google how to fix this, since I just don’t remember how and CSS is hard! I was shocked that just by asking Copilot Chat to center the “X” button in the modal, it gave me a better suggestion with some new CSS that added display properties to the button and positioned it the way I intended. With Copilot Chat, I got the fix I needed without having to leave the IDE or break my flow.

Making accessibility improvements

I have a background in web accessibility and I knew there would be some improvements needed to make the modals interactive with proper focus handling. There are many facets to making a component accessible and it’s important to strategize early on. Best practices include working with accessibility linting tools, and also specialists that can help you balance constraints at the start of the design and development process.

Copilot Chat can be a great addition to those tools by pointing you in the right direction to fixing accessibility issues. In the case of my gallery, the images were not presenting themselves as interactive to keyboard or screen reader users (or, even visually, which goes to show that accessibility makes products better for everyone!). I asked Copilot Chat what it recommended for me to improve the interactivity of the images. The video below illustrates the suggestions it provided around using tabindex, aria attributes, and handling keydown events.
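As a rough illustration of the kind of suggestion described above, here is a minimal sketch of how tabindex, ARIA attributes, and a keydown handler might be combined. The names `interactiveImageProps` and `KeyLikeEvent` are hypothetical and not taken from the original project; the sketch assumes the image wrapper is a non-button element that should activate on Enter or Space.

```typescript
/** Minimal shape of the keyboard event fields this sketch relies on (hypothetical type). */
interface KeyLikeEvent {
  key: string;
  preventDefault(): void;
}

/** Attributes that expose a non-button element as an interactive control. */
interface InteractiveImageProps {
  role: "button";
  tabIndex: number;
  "aria-label": string;
  onClick: () => void;
  onKeyDown: (event: KeyLikeEvent) => void;
}

/** Builds the props for one gallery image; `open` is whatever opens the modal. */
function interactiveImageProps(label: string, open: () => void): InteractiveImageProps {
  return {
    role: "button",      // announce the element as a button to assistive technology
    tabIndex: 0,         // place it in the normal keyboard tab order
    "aria-label": label, // give screen readers a name for the control
    onClick: open,
    onKeyDown: (event) => {
      // Buttons activate on Enter and Space, so mirror that behavior here.
      if (event.key === "Enter" || event.key === " ") {
        event.preventDefault(); // stop Space from scrolling the page
        open();
      }
    },
  };
}
```

In a React component, props like these could be spread onto the image wrapper. Using a native button element usually makes most of this unnecessary, since the browser provides the role, focusability, and keyboard activation for free.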

There are, of course, other accessibility considerations to be made here. At some point I decided to make each of the images button elements with a background image, since generally it’s better to use semantic HTML. I then carried on with the rest of my work to manage the focus correctly when opening and closing the modal, as well as making sure only the visible or focused content is presented to a screen reader.
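The focus handling mentioned above can be sketched roughly as follows. This is not the code from the gallery; it is one common pattern, shown under the assumption that the modal remembers which element opened it and hands focus back on close. `Focusable` and `ModalFocusManager` are hypothetical names introduced for illustration.

```typescript
/** Anything that can receive focus, for example an HTMLElement in the browser. */
interface Focusable {
  focus(): void;
}

/** Remembers what had focus before a modal opened and restores it on close. */
class ModalFocusManager {
  private previouslyFocused: Focusable | null = null;

  /** Call when the modal opens: store the trigger and move focus into the modal. */
  open(trigger: Focusable | null, firstModalControl: Focusable): void {
    this.previouslyFocused = trigger;
    firstModalControl.focus();
  }

  /** Call when the modal closes: return focus to where the user was. */
  close(): void {
    if (this.previouslyFocused !== null) {
      this.previouslyFocused.focus();
    }
    this.previouslyFocused = null;
  }
}
```

In the browser, the trigger would typically be the image button that was clicked and the first modal control would be the close button. Hiding background content from screen readers while the modal is open is a separate step that this sketch does not cover.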

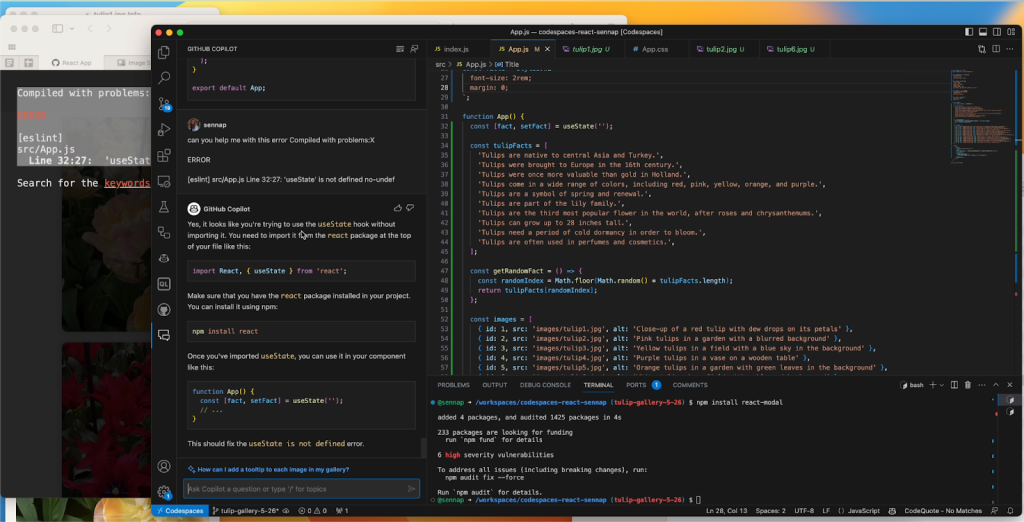

Troubleshooting errors

I was also surprised by Copilot Chat’s ability to help me debug my project whenever I came across an error message. I’d just paste the error into the chat window and GitHub Copilot would offer an explanation for what went wrong and an alternative approach so I could fix the bug quickly and move on.

Writing tests

Knowing that GitHub Copilot can suggest bug fixes, I also wanted to see how it would suggest I write tests for my code. You can ask Copilot Chat for all sorts of test cases, as well as just what kind of testing framework would make the most sense for your application.

In another iteration of my gallery, I used GitHub Copilot to help me render a countdown to the next opening of tulip season (I went with March 21, 2024, when the tulip festival starts). I decided to make use of the new Copilot Chat slash commands, which make it simple to highlight a function and prompt Copilot to help create some test cases. It suggested using React Testing Library for rendering, as well as some methods from Jest to simulate the passage of time and make sure the passing days are represented correctly. From there, I learned about the Jest framework’s Timer Mocks and best practices for testing with fake timers.
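To make the countdown idea concrete, here is a minimal sketch of the date arithmetic, not the component from the gallery. The names `daysUntil` and `TULIP_SEASON_START` are hypothetical, and the sketch assumes the countdown shows whole days, rounded up, with the date interpreted as midnight UTC. Passing the current time in as a parameter is one way to keep the logic deterministic in tests; when code reads the clock itself, Jest's fake timers (`jest.useFakeTimers()` and `jest.setSystemTime()`) can control it instead.

```typescript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

/** Whole days remaining until `target`, rounded up; 0 once the target has passed. */
function daysUntil(target: Date, now: Date): number {
  const remainingMs = target.getTime() - now.getTime();
  return Math.max(0, Math.ceil(remainingMs / MS_PER_DAY));
}

// March 21, 2024 (month index 2), expressed in UTC to avoid local time zone drift.
const TULIP_SEASON_START = new Date(Date.UTC(2024, 2, 21));

// Example: a full day before the start of the season.
const oneDayBefore = new Date(Date.UTC(2024, 2, 20));
console.log(daysUntil(TULIP_SEASON_START, oneDayBefore)); // prints 1
```

A component would call `daysUntil(TULIP_SEASON_START, new Date())` when rendering, while a test can pass any fixed `now` it likes.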

Without GitHub Copilot and this new chat feature, navigating a test framework and relying solely on its documentation would have taken even more time.

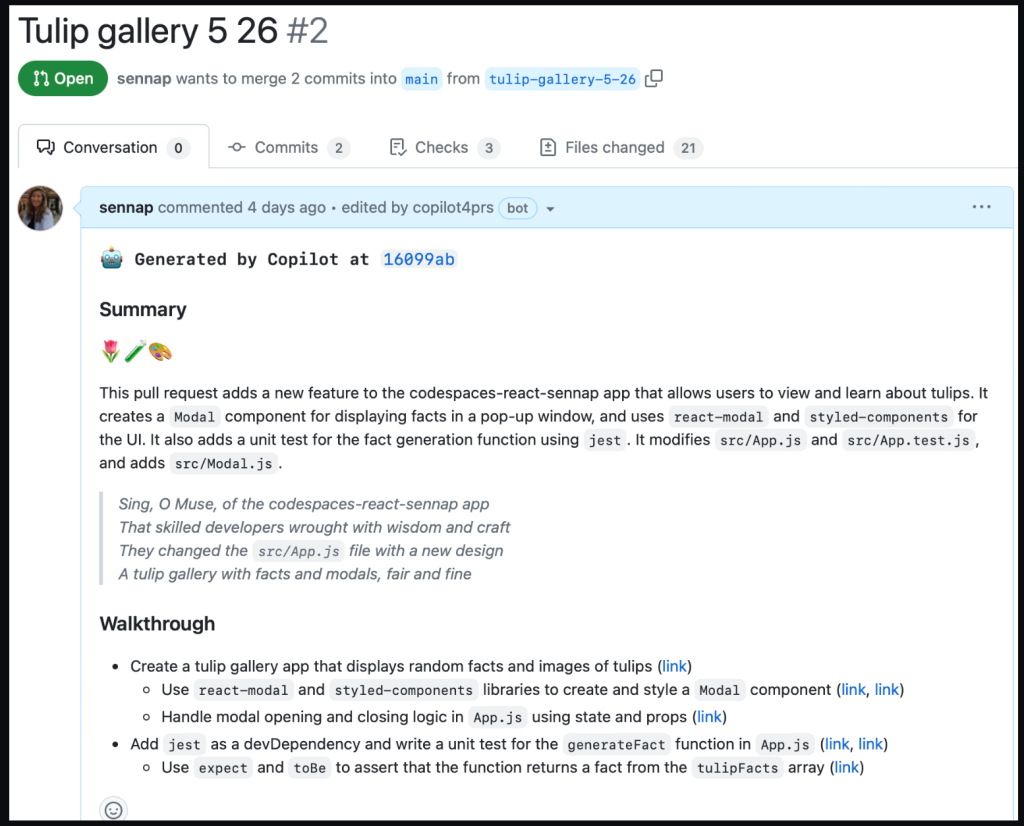

Summarizing my changes with GitHub Copilot for pull requests

Lastly, I used GitHub Copilot for pull requests to help summarize all the changes I made in a pull request. It gave me a summary of my changes, a walkthrough of each of the diffs relating to those changes, and even a poem about my application.

All of this is to show how Copilot Chat and GitHub Copilot for pull requests made the entire coding process much more enjoyable for me while working in an unfamiliar framework—from the initial idea phase to submitting a pull request.

Potential limitations and considerations

While the productivity increases from GitHub Copilot are amazing, there are valid concerns around the quality of the code that AI pair programming tools suggest and the danger of blindly trusting them. That’s why it’s important to remember that you, the developer, are ultimately the pilot. I think of using GitHub Copilot as similar to pair programming with another developer: it helps me work faster, but I still need to verify the suggestions it’s giving me to ensure they meet my requirements.

While GitHub Copilot has numerous filters in place to avoid suggestions with vulnerabilities, it’s still important to review and test before deploying. As with any code you did not independently originate, you should ensure code suggestions go through proper code review, code security, and code quality channels to maintain the standards of your team.
