CodeQL team uses AI to power vulnerability detection in code

Learn how GitHub’s CodeQL leveraged AI modeling and multi-repository variant analysis to discover a new CVE in Gradle.

AI is fundamentally changing the technology and security landscape. At GitHub, we see AI as a way for developers to both speed up their development process and write more secure code. For instance, GitHub Copilot includes a security filter that targets the most common vulnerable coding patterns in Python and JavaScript, including hardcoded credentials, SQL injections, and path injections, to prevent vulnerable suggestions from being made.

We’re also looking at ways security teams can use AI to strengthen their organizations’ security posture, specifically by using prescriptive security intelligence to assess, prioritize, visualize, and audit that posture in context across complex, interconnected, and hybrid environments.

For example, our CodeQL team is responsible for creating models of frameworks and APIs to help CodeQL discover more vulnerabilities out of the box. Creating and testing these models is a time-consuming process, so we started thinking about ways to use AI to help speed things up.

The results have been exciting: the team used AI to speed up our modeling process and improve the way we detect vulnerabilities in code.

How the CodeQL team discovered a new CVE using AI modeling

For CodeQL to produce results, we need to be able to recognize APIs as sources, sinks, or propagators of untrusted user data, also known as tainted data. The open source software (OSS) community has developed thousands of packages that potentially contain APIs that we need to recognize. Keeping up with these packages is critical because missing a source, a sink, or a taint propagator could lead to false negatives.
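To make the three roles concrete, here is a minimal toy sketch in Python. It is not how CodeQL is implemented, and every API name in it (`http.get_param`, `paths.join`, `files.open`) is hypothetical. It only illustrates why a missing model can hide a real flow: taint starts at a source, is carried forward by propagators, and is reported when it reaches a sink.

```python
from dataclasses import dataclass, field


@dataclass
class Models:
    """Hypothetical API models: which API names play which taint role."""
    sources: set = field(default_factory=set)
    sinks: set = field(default_factory=set)
    propagators: set = field(default_factory=set)


def find_tainted_sink_calls(program, models):
    """Walk a straight-line program and report sinks reached by tainted data.

    `program` is a list of (target_variable, api_name, argument_variables)
    steps. This is a deliberately simplified stand-in for real data flow
    analysis: no branches, no aliasing, no sanitizers.
    """
    tainted = set()
    findings = []
    for target, api, args in program:
        arg_tainted = any(arg in tainted for arg in args)
        if api in models.sinks and arg_tainted:
            findings.append(api)
        if target is None:
            continue
        if api in models.sources:
            # A source introduces untrusted data.
            tainted.add(target)
        elif api in models.propagators and arg_tainted:
            # A propagator passes taint from its arguments to its result.
            tainted.add(target)
    return findings


# A request parameter is joined into a path, which is then opened.
PROGRAM = [
    ("name", "http.get_param", []),
    ("path", "paths.join", ["name"]),
    (None, "files.open", ["path"]),
]

full_models = Models(
    sources={"http.get_param"},
    sinks={"files.open"},
    propagators={"paths.join"},
)
# Same models, but the propagator was never modeled.
incomplete_models = Models(
    sources={"http.get_param"},
    sinks={"files.open"},
)

print(find_tainted_sink_calls(PROGRAM, full_models))
print(find_tainted_sink_calls(PROGRAM, incomplete_models))
```

With the full set of models, the sketch reports the call to `files.open`. With the propagator left unmodeled, the taint is lost at `paths.join` and nothing is reported, which is the kind of false negative described above.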

Traditionally, we modeled the APIs manually, but this was incredibly time-consuming for our team given the thousands of OSS frameworks. In the last six months, we’ve started using large language models (LLMs) to automatically model APIs for us. This not only turbocharged our modeling efforts, but also allowed CodeQL to recognize more sinks, which reduces CodeQL’s false negative rate and helps it detect more vulnerabilities.

When we make improvements to CodeQL, we often test them using a technique called variant analysis, which uses a known vulnerability pattern as a seed to find similar, previously unknown vulnerabilities. We often use this technique to run CodeQL queries across thousands of repositories hosted on GitHub.com. We did exactly that, running queries that use the AI-generated models across the most impactful repositories on GitHub.com.

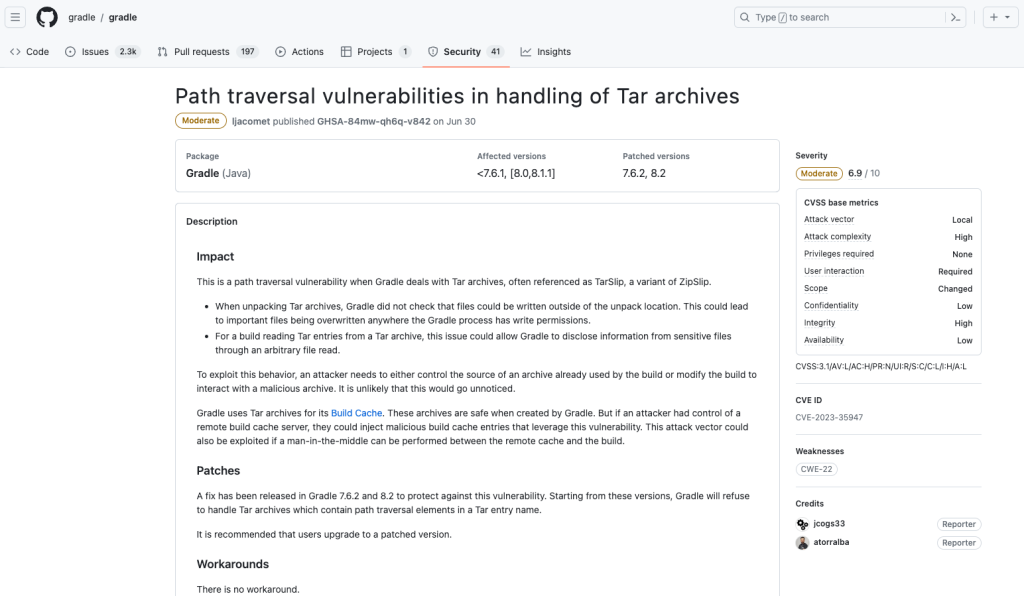

This combination of AI-generated models and variant analysis led the team to discover a new CVE (CVE-2023-35947), a path traversal vulnerability in Gradle. For more information about the exact vulnerability, check out the entry on the Security Lab’s CodeQL Wall of Fame and the GitHub Advisory Database entry.
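For readers unfamiliar with this vulnerability class, the following is a generic sketch of a path traversal check in Python. It is not Gradle’s code and not the fix that was shipped for the CVE. The function name `resolve_entry` and its parameters are assumptions made for illustration. The idea is that a file name taken from untrusted input, such as an archive entry, must not resolve to a location outside the intended destination directory.

```python
import os


def resolve_entry(dest_dir: str, entry_name: str) -> str:
    """Return the absolute output path for an untrusted entry name.

    Raises ValueError if the resolved path would land outside dest_dir,
    for example because the name contains ".." segments or is absolute.
    """
    dest_root = os.path.realpath(dest_dir)
    # Resolve "..", symlinks, and absolute names before comparing.
    target = os.path.realpath(os.path.join(dest_root, entry_name))
    if os.path.commonpath([dest_root, target]) != dest_root:
        raise ValueError(f"entry escapes destination: {entry_name!r}")
    return target
```

In taint terms, the untrusted entry name is the tainted data and the file write that follows is the sink. A name such as `../../etc/passwd` is rejected by this check, while `docs/readme.txt` resolves to a path under the destination directory.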

Learn more about multi-repository variant analysis

AI is fundamentally changing the way we secure our software. We will continue to strategically leverage AI to iterate and improve upon our security offerings with an eye towards bringing AI-powered security testing into your development workflows. The discovery of the CVE in Gradle is just one example of how GitHub’s security teams have been leveraging GitHub Advanced Security and AI to unlock incredible results.

In March this year, we shipped multi-repository variant analysis (MRVA), which allows you to perform variant analysis at scale. If you’re looking to get started with CodeQL and code scanning on your repository, check out our documentation. As always, CodeQL is free to use on open source repositories. If you’re interested in running CodeQL queries at scale, check out our multi-repository variant analysis documentation.
