Gaurav Mittal

Principal Researcher, Microsoft Code | AI. I am a tech lead focused on product-driven AI research to improve the developer ecosystem and GitHub Copilot experience via intelligent and reliable models and agentic frameworks.

How to build the “Trust Layer” for GitHub Copilot cloud agent without brittle scripts or black-box judgements by using dominator analysis.

Modern software testing is built on a fragile assumption: correct behavior is repeatable. For deterministic code, that assumption mostly holds. But for autonomous agents like GitHub Copilot cloud agent, especially as we explore the frontiers of integrated “Computer Use,” that assumption breaks down almost immediately.

As agents move beyond simple code suggestions to interacting with real environments like UIs, browsers, and IDEs, correctness becomes multi-path. Loading screens can appear or disappear, timing shifts, and multiple valid action sequences can lead to the same result. Unless our GitHub Actions workflows are robust enough to account for this variability, it’s common for an agent to succeed at a task while the test still fails—a “false negative” that halts production.

This blog post explores how to move past brittle, step-by-step scripts and toward an independent “Trust Layer” for agentic validation. We will demonstrate a model that focuses on essential outcomes rather than rigid paths, providing a way to validate behavior that is explainable, lightweight, and ready for real-world CI pipelines.

Imagine you’re responsible for a GitHub Actions pipeline that relies on Copilot cloud agent to validate real-world workflows. The agent could be leveraging Computer Use, navigating within a containerized cloud environment, for the workflow validation.

On Tuesday, the build is green. On Wednesday, the test fails—even though no code has changed.

Here’s what happened: A minor network lag on the hosted runner caused a loading screen to persist for a few extra seconds. The agent waited, adapted, and completed the task successfully. But your CI pipeline still flagged the run as a failure, not because the task failed, but because the execution path no longer matched the recorded script or assertion timing.

The agent didn’t fail. The validation did.

This surfaces three recurring pain points that create a “trust gap” in agent-driven testing:

We’re in a transition period where agentic systems like GitHub Copilot Coding Agent are enabling faster development, but our traditional validation approaches remain rigid. In deterministic software, correctness is as simple as matching a specific input to a known output. But with agents, the process in between is intentionally non-deterministic. As agents are increasingly deployed in production, correctness isn’t about following a prescribed set of steps; it’s about “reliably achieving the essential outcomes.”

To scale these systems, we need a validation framework that can distinguish between “incidental noise” (e.g., a loading screen) and “critical failures” (e.g., failing to save data). Correctness shifts from “did this happen?” to “what had to happen for success to be real?”

Traditional testing tools work well when execution paths are fixed. They struggle when behavior branches—the tools begin to fracture, not because they’re poorly engineered, but because they assume a stable sequence.

When we apply these to a Copilot Coding Agent, including when navigating a containerized environment, the limitations become clear across four common paradigms:

While these approaches differ in implementation, they share a common structural assumption: Correctness is defined by adherence to a particular sequence of observable states.

For agentic systems, that assumption breaks down. To build true developer trust in these systems, including GitHub Copilot, we must move beyond checking linear scripts and start validating structured behaviors.

To move past brittle tests and build the Trust Layer, we have to fundamentally change how we define “correct.” In agentic systems, correct executions don’t have to look identical. They do need to share a common logical structure.

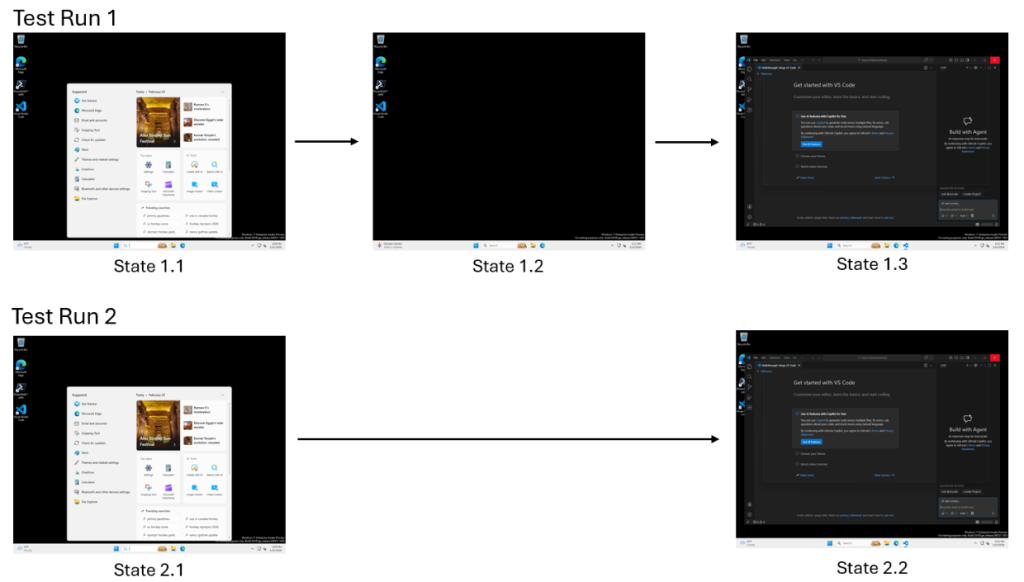

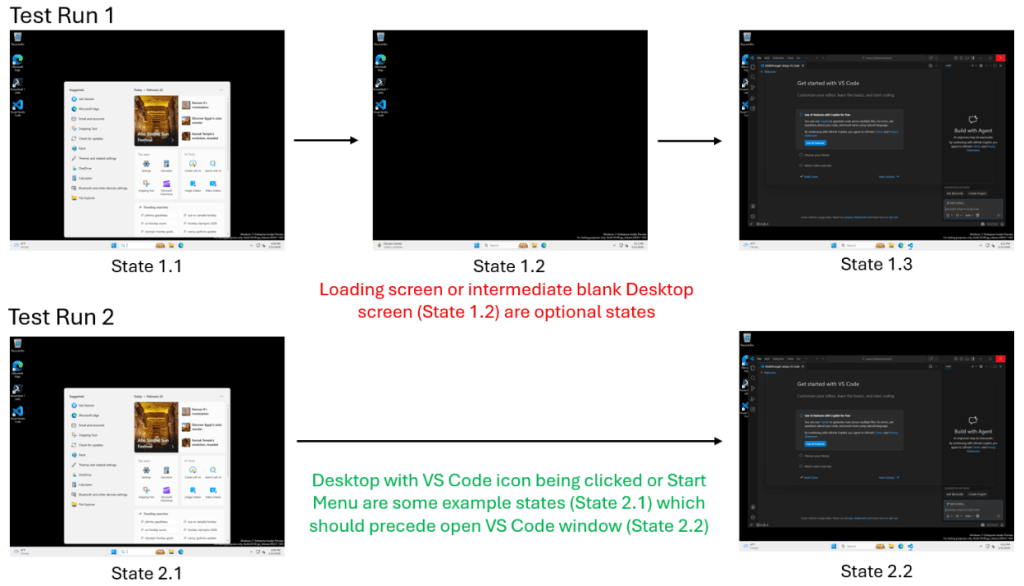

Think of a Computer Use-enabled GitHub Copilot Coding Agent performing a search in VS Code in a containerized cloud environment. In one run, a loading screen appears for several seconds; in another, the UI loads instantly (shown above).

A traditional test sees these as two different results. But to a developer, the loading screen is incidental; it doesn’t change whether the task was successful.

We can classify agent behavior into three categories:

A loading screen may appear or not. But search results must appear. Only one of these determines correctness.

The distinction between “must-have” and “incidental” behaviors is a concept rooted in compiler theory known as dominator relationships.

In a control-flow graph, a node A “dominates” node B if every path from the start to B must go through A.
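To make the definition concrete, here is a minimal sketch of the classic iterative dominator computation, applied to a toy graph of the search scenario. The graph, the state names (`start`, `search_dialog`, `loading`, `results`), and the function `compute_dominators` are illustrative assumptions, not the implementation used in our framework.

```python
from typing import Dict, List, Set


def compute_dominators(graph: Dict[str, List[str]], start: str) -> Dict[str, Set[str]]:
    """Return, for every node, the set of nodes that dominate it.

    Uses the textbook iterative data-flow formulation:
    dom(n) = {n} | intersection of dom(p) over all predecessors p of n.
    Assumes every node is reachable from `start`.
    """
    nodes: Set[str] = set(graph)
    for successors in graph.values():
        nodes.update(successors)

    # Build the predecessor map from the successor lists.
    preds: Dict[str, Set[str]] = {n: set() for n in nodes}
    for src, successors in graph.items():
        for dst in successors:
            preds[dst].add(src)

    # Start from "everything dominates everything" and shrink to a fixpoint.
    dom: Dict[str, Set[str]] = {n: set(nodes) for n in nodes}
    dom[start] = {start}
    changed = True
    while changed:
        changed = False
        for n in nodes - {start}:
            if not preds[n]:
                continue  # no predecessors: nothing to intersect
            new = set.intersection(*(dom[p] for p in preds[n])) | {n}
            if new != dom[n]:
                dom[n] = new
                changed = True
    return dom


# Hypothetical toy graph: the loading screen is an optional detour.
graph = {
    "start": ["search_dialog"],
    "search_dialog": ["loading", "results"],
    "loading": ["results"],
    "results": [],
}
dominators = compute_dominators(graph, "start")
print(sorted(dominators["results"]))  # ['results', 'search_dialog', 'start']
```

In this toy graph, `search_dialog` dominates `results` because every path passes through it, while `loading` does not because one path skips it.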

By applying dominator analysis to agent execution traces, we can automatically identify:

This lets us extract a minimal, explainable definition of correctness.

To capture the complexity of agentic behavior, we must move away from treating executions as linear, one-dimensional scripts. Instead, our framework models behavior using a graph-based structure known as a Prefix Tree Acceptor (PTA).

In this model, an execution is not a series of commands but a directed graph where:

Treating executions as graphs allows us to represent branching and convergence—concepts that are impossible to capture in a linear script.
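As a rough sketch of the idea, a prefix tree can be built from a handful of successful traces so that shared prefixes are stored once and divergent steps become branches. This example assumes each trace is already a list of state labels; the class `PTANode`, the functions `build_pta` and `accepts`, and the labels themselves are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List


@dataclass
class PTANode:
    """One node of the prefix tree; children are keyed by the next state label."""
    children: Dict[str, "PTANode"] = field(default_factory=dict)
    accepting: bool = False  # True if some successful trace ends here


def build_pta(traces: Iterable[List[str]]) -> PTANode:
    """Insert every trace into the tree, sharing common prefixes."""
    root = PTANode()
    for trace in traces:
        node = root
        for state in trace:
            node = node.children.setdefault(state, PTANode())
        node.accepting = True
    return root


def accepts(root: PTANode, trace: List[str]) -> bool:
    """Check whether a trace exactly follows a path recorded in the tree."""
    node = root
    for state in trace:
        next_node = node.children.get(state)
        if next_node is None:
            return False
        node = next_node
    return node.accepting


# Two hypothetical successful sessions: one slow, one fast.
traces = [
    ["editor", "search_dialog", "loading", "results"],
    ["editor", "search_dialog", "results"],
]
pta = build_pta(traces)
branch = pta.children["editor"].children["search_dialog"]
print(sorted(branch.children))  # ['loading', 'results']
```

A plain prefix tree only captures branching. Convergence appears once nodes that represent the same logical state (here, the two `results` nodes) are merged, which is the job of state equivalence detection.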

By shifting the representation from a sequence of steps to a structured behavior model, we stop penalizing agents for taking a different path and start validating whether they followed a logically sound one.

To move agents from experimental demos to production-grade infrastructure, our team developed a novel validation algorithm that moves away from rigid scripts and instead learns by example. To test this, we focused on a complex non-deterministic environment: an AI agent navigating Visual Studio Code via “Computer Use.” By observing just 2–10 successful sessions, our algorithm automatically constructs a “ground truth” model that distinguishes between an agent’s valid variations and actual failures.

This approach is uniquely powerful for developers because it requires no manual specification and no large-scale model training. Because the resulting model is a graph of actual execution states, the decisions are entirely explainable. When validation fails, our algorithm provides clear failure reasoning by identifying exactly which essential state was missed.

State equivalence is the hardest problem in agent validation. For example, how do we know if two different screenshots represent the same logical UI state?

We solve this using a three-tier equivalence detection framework that moves from fast visual metrics to deep semantic understanding:

This is not a naive pixel-by-pixel comparison, nor is it “LLM hand-waving” where the model is asked to judge the whole task. By using the LLM defensively and sparingly to resolve specific ambiguities, our framework remains robust enough to handle UI noise but precise enough to detect a real regression.
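The cascade pattern can be sketched as follows. This is only an illustration of using cheap checks first and escalating to an expensive judge for ambiguous cases: the specific tiers, the similarity metric, the thresholds `accept` and `reject`, and the `semantic_judge` callback (a stand-in for an LLM call) are all assumptions made for this example and are not the tiers of our framework.

```python
from typing import Callable, Sequence

Pixels = Sequence[int]  # hypothetical: flattened grayscale values in 0..255


def similarity(a: Pixels, b: Pixels) -> float:
    """Return 1.0 for identical images, lower as mean absolute difference grows."""
    if len(a) != len(b) or not a:
        return 0.0
    mean_diff = sum(abs(x - y) for x, y in zip(a, b)) / (255 * len(a))
    return 1.0 - mean_diff


def same_state(
    a: Pixels,
    b: Pixels,
    semantic_judge: Callable[[Pixels, Pixels], bool],
    accept: float = 0.98,
    reject: float = 0.80,
) -> bool:
    """Decide equivalence, escalating only when cheaper tiers are inconclusive."""
    # Tier 1 (assumed): exact match, no further work needed.
    if tuple(a) == tuple(b):
        return True
    # Tier 2 (assumed): fast visual metric with a confident accept/reject band.
    score = similarity(a, b)
    if score >= accept:
        return True
    if score <= reject:
        return False
    # Tier 3 (assumed): ambiguous band, defer to the expensive semantic judge.
    return semantic_judge(a, b)


judge_calls = []


def fake_judge(a: Pixels, b: Pixels) -> bool:
    """Stand-in for a multimodal model call; records that it was invoked."""
    judge_calls.append((len(a), len(b)))
    return True


base = [10] * 100
nearly_same = [12] * 100                 # tiny global shift
very_different = [200] * 100             # clearly another screen
ambiguous = [10] * 70 + [110] * 30       # partial change

results = [
    same_state(base, nearly_same, fake_judge),
    same_state(base, very_different, fake_judge),
    same_state(base, ambiguous, fake_judge),
]
```

Only the ambiguous pair reaches the judge, which keeps the expensive call rare.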

Once the various execution traces are merged into a unified graph, our algorithm applies dominator analysis to isolate the core skeleton of the task.

In our VS Code experiments, the “Search Dialog” state is identified as an essential milestone because it is a mathematical dominator—it is logically impossible to reach the results without first triggering the search. Conversely, a “Loading” screen dominates nothing; because it is bypassed in faster runs, the algorithm flags it as an optional variation rather than a requirement for success. This ensures the “Trust Layer” framework only alerts you when a critical step is missed—not when the environment fluctuates.

By extracting these essential nodes into a dominator subtree, we create a “ground truth” model that represents the minimal, explainable definition of correctness. This shifts the validation focus away from the specific steps the agent took and toward the critical checkpoints it was required to hit.

With the dominator tree established as our ground truth, validating a new, unseen execution becomes a process of structural comparison rather than a search for a perfect match. This ensures that as long as a cloud agent hits the “must-have” milestones, it is free to navigate the environment, or adapt its integrated Computer Use path, as it sees fit.

When a new execution trace arrives, our validation algorithm extracts its sequence of states and checks it against the dominator tree using topological subsequence matching.
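A simplified version of this check can be sketched as an ordered subsequence match. The example assumes the essential states form a single ordered chain (the dominators of one goal state always do) and that traces are lists of state labels; `validate`, `ValidationResult`, and the labels are hypothetical names, not our framework's API.

```python
from typing import List, NamedTuple


class ValidationResult(NamedTuple):
    passed: bool
    coverage: float      # fraction of essential states matched in order
    missing: List[str]   # essential states that were not reached in order


def validate(trace: List[str], essential: List[str]) -> ValidationResult:
    """Check that `essential` appears in `trace` as an ordered subsequence.

    Extra states in the trace (for example a loading screen) are ignored.
    """
    missing: List[str] = []
    position = 0  # only search after the last matched milestone
    for milestone in essential:
        try:
            index = trace.index(milestone, position)
        except ValueError:
            missing.append(milestone)
            continue
        position = index + 1
    matched = len(essential) - len(missing)
    coverage = matched / len(essential) if essential else 1.0
    return ValidationResult(not missing, coverage, missing)


essential = ["editor", "search_dialog", "results"]

slow_run = validate(["editor", "search_dialog", "loading", "results"], essential)
broken_run = validate(["editor", "loading"], essential)

print(slow_run.passed, slow_run.coverage)   # True 1.0
print(broken_run.passed, broken_run.missing)  # False ['search_dialog', 'results']
```

The slow run passes despite the extra `loading` state, while the broken run fails with a list of the milestones it never reached.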

Our framework produces more than just a binary pass/fail; it provides a coverage metric and a clear explanation:

This level of detail transforms the validation from a “black box” into a diagnostic tool that developers can actually use to debug their agents and their environments.

To prove the efficacy of this structural approach for the Trust Layer, we conducted a controlled experiment comparing our Dominator Tree method against an agent’s self-assessment (where the Computer-Use Agent, or CUA, reports its own success) in a real-world scenario: a Copilot Agent custom VS Code extension test suite.

In tests designed to differentiate successful executions from those failing due to product bugs or agent errors, the results were definitive:

| Metric | CUA Self-Assessment | PTA (Dominator Tree) |
|---|---|---|
| Accuracy | 82.2% | 100% (+17.8) |
| Precision | 83.3% | 100% (+16.7) |
| Recall | 60.0% | 100% (+40.0) |
| F1-Score | 69.8% | 100% (+30.2) |

While the agent (CUA) frequently misreported failures as successes, often due to timing out or misinterpreting its own state, the Dominator Tree achieved perfect differentiation by focusing on whether essential milestones were actually reached.
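For readers who want to sanity-check the table, F1 is the harmonic mean of precision and recall. The short sketch below recomputes the CUA F1-score from the reported precision and recall; the helper `f1_score` is a name chosen for this example, and the inputs are the rounded percentages from the table.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Rounded values taken from the CUA Self-Assessment column.
cua_f1 = f1_score(0.833, 0.600)
print(f"{cua_f1:.1%}")  # 69.8%
```

The result matches the F1-score reported in the table, and it shows why the low recall dominates: a harmonic mean is pulled toward the smaller of its two inputs.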

The most significant impact for developers is in the reduction of “false alarms.” When a test fails, you need high-signal feedback to know if the product code is broken or if the agent simply stumbled due to environmental noise.

Structural validation beats self-reported success by a wide margin. By moving the “source of truth” from the agent’s internal logic to a learned external structure, we can significantly reduce the manual review time wasted on flaky test results and false positives in CI pipelines.

For this Trust Layer framework to be effective, it must move beyond a research prototype and integrate directly into the systems developers use every day. By treating correctness as a learned structure rather than a rigid script, we can significantly improve the reliability of production-grade automation within the GitHub ecosystem.

This approach is designed to strengthen several critical areas of the software development lifecycle:

The ultimate goal of this framework is to move agents from “experimental demos” to “production infrastructure.” By providing reasoning, where a failure clearly points to a missing essential state, we give developers the transparency they need to trust autonomous systems in their workflows.

While structural validation represents a significant leap forward, our current framework has a few boundaries as it moves toward full maturity.

Current limitations include:

Future work includes:

As AI agents move from experimental demos to core infrastructure, validation has to evolve with them and move past brittle scripts to resilient systems.

We don’t need black-box models to judge other black-box models. We need structural guarantees developers can inspect, reason about, and trust.

By combining classic compiler theory (i.e., dominator analysis) with multimodal AI, we’ve demonstrated that it’s possible to learn an explainable, robust definition of success from just a handful of examples. This framework for the Trust Layer provides:

As we move forward, focusing on these practical, explainable paths will be essential to ensuring that the GitHub Copilot Coding Agent is not just powerful, but also a trustworthy component of the developer workflow. This is particularly critical with the increasing adoption of Computer Use in the overall AI-native development lifecycle. By moving the “source of truth” from an agent’s internal logic to a learned external structure, we provide the guarantees needed to make autonomous agents viable, production-grade tools in modern infrastructure.

Our journey toward verifiable autonomy is just beginning. For a deep dive into our Dominator Analysis-based framework, you can read the complete paper.
