Meet Nodeload, the new Download server

I recently launched the first GitHub node.js project: nodeload. Nodeload replaces the ruby git archive download server. Basically, any time you download a tarball or zip file of any repository…

I recently launched the first GitHub node.js project: nodeload. Nodeload replaces the ruby git archive download server. Basically, any time you download a tarball or zip file of any repository branch or tag, you’re going through nodeload.

This is a challenging issue because we can’t just store downloads anywhere. Either we pre-build archives for every single branch and tag after every repo commit, or we generate each one fresh. Of course, there is a healthy hot cache of built archives to deal with popular requests.

In the old system, requests to download an archive would create a Resque job. This Resque job actually runs a git archive command on the archive server, pulling data from one of the file servers. Then, the original request sends you to a small ruby Sinatra app to wait for the job. It would essentially just check for the existence of a memcache flag before redirecting to the final download address. At the time, we were running about 3 Sinatra instances and 3 Resque workers.

This is a pragmatic solution when time and manpower are your constraints. I saw it as a great opportunity for Node.js. This is a simple feature that doesn’t depend on much of the site. In fact, the extra moving parts make it seem more complicated than it really is. The very nature of serving fresh git archives highlights the strengths of an event driven environment like Node.js, and the weaknesses of a blocking environment like Ruby.

However, I ran into a big roadblock right away: How do I even get the right file server for an archive? How can I authenticate whether a user has access to a private repo? Rather than reimplementing internal application logic, I went with a simple JSON API call. It returns the proper HTTP codes for missing or unauthorized repos, or a JSON object with the filesystem information. From there, nodeload spawns a git archive process, and redirects the user when it’s finished. This way I was able to use the existing logic for user permissions and repository paths without duplicating logic or loading the GitHub app in ruby.

This is just the first iteration of the archive server. My ultimate goal is to get an address like “/mxcl/homebrew/tarball/master” to serve archives without any redirects. But, this first step runs stable, using less memory than a single Sinatra instance. I’m not at the point where I want to write entire applications with Node.js, but it feels like a great fit for certain cases like this.

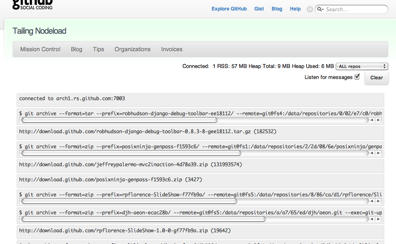

One of the other bonuses of using Node.js: a socket.io web socket server for monitoring:

Written by

Related posts

From pair to peer programmer: Our vision for agentic workflows in GitHub Copilot

AI agents in GitHub Copilot don’t just assist developers but actively solve problems through multi-step reasoning and execution. Here’s what that means.

GitHub Availability Report: May 2025

In May, we experienced three incidents that resulted in degraded performance across GitHub services.

GitHub Universe 2025: Here’s what’s in store at this year’s developer wonderland

Sharpen your skills, test out new tools, and connect with people who build like you.